Basic Concepts and Representations

Overview

Introduction

MeloSpyLib is a general-purpose Python library for encoding, analysing, visualising, and converting monophonic melody data. It combines statistical and computational approaches in a highly flexible framework that leaves the researcher (music psychologist, ethnomusicologist, MIR researcher, etc.) the utmost degree of freedom while providing a large number of sensible defaults.

MeloSpyLib is currently being developed as part of the Jazzomat Project. It is based on ideas outlined in [Pfleiderer2010] and on the mathematical framework developed in [Frieler2009].

MeloSpyLib has not yet been released. The documents here are mainly meant to give a preview and an overview, providing background information for using the MeloSpySuite.

General structure

MeloSpyLib consists of six inter-related modules:

- The Basic Representation module contains all classes for representing melodies and metadata, as well as the transformations and abstractions related to them.
- The Input/Output module contains classes for reading and writing melodies.
- The Feature Machine is a general and flexible system for extracting arbitrary features from melodies. Features are user-definable in a modular fashion via YAML configuration files.
- The Pattern Retrieval module contains classes for extracting patterns from arbitrary abstractions of melodies, using either an n-gram or a self-similarity approach.
- The Similarity module computes similarities between melodies, using measures that the user can define in YAML files.
- The Visualization module operates on extracted features and provides several visualisations.

In the following sections, we give a short introduction to the basic concepts for representing melodies in general and jazz solos in particular.

Core Representation of Melodic Objects

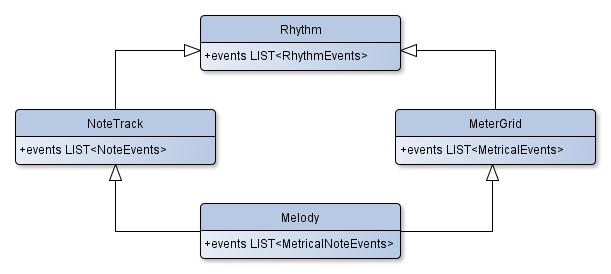

Melodies are seen as part of a larger class hierarchy of music-related objects (cf. Fig. 1: Core class hierarchy). The fundamental objects are Rhythms, which are sequences of RhythmEvents.

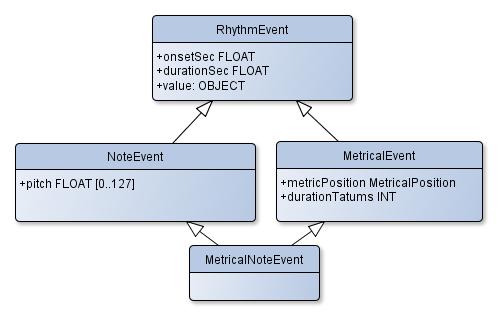

Rhythm events are conceived as triples (onset, duration, value), where onset and duration are measured in seconds and the value can be any object, often simply a label.

The values represent things “happening” at a given time (onset) and lasting for a certain duration. Rhythms in this sense can be regarded as a kind of generalised time series. However, in MeloSpy we are mostly interested in specific musical objects, which is achieved by specialising the value of a RhythmEvent into certain types with specific properties (cf. Fig. 2: Core event hierarchy).
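A minimal sketch of the (onset, duration, value) idea, assuming plain Python dataclasses. The class names RhythmEvent and Rhythm follow the text, but the fields as written, the `offset` property and the `iois()` method are hypothetical illustrations, not MeloSpyLib's actual API. The values here are plain labels, which shows how a Rhythm works as a generalised time series.

```python
from dataclasses import dataclass, field
from typing import Any, List


@dataclass
class RhythmEvent:
    """An (onset, duration, value) triple; times are given in seconds."""
    onset: float
    duration: float
    value: Any  # arbitrary payload, often just a label

    @property
    def offset(self) -> float:
        """End time of the event in seconds (hypothetical helper)."""
        return self.onset + self.duration


@dataclass
class Rhythm:
    """A sequence of RhythmEvents, i.e. a generalised time series."""
    events: List[RhythmEvent] = field(default_factory=list)

    def append(self, event: RhythmEvent) -> None:
        """Add an event and keep the sequence ordered by onset."""
        self.events.append(event)
        self.events.sort(key=lambda e: e.onset)

    def iois(self) -> List[float]:
        """Inter-onset intervals between consecutive events, in seconds."""
        onsets = [e.onset for e in self.events]
        return [later - earlier for earlier, later in zip(onsets, onsets[1:])]


# Usage: a drum-like rhythm whose values are plain labels.
rhythm = Rhythm()
rhythm.append(RhythmEvent(onset=0.5, duration=0.25, value="snare"))
rhythm.append(RhythmEvent(onset=0.0, duration=0.25, value="kick"))
rhythm.append(RhythmEvent(onset=1.0, duration=0.5, value="kick"))
print([e.value for e in rhythm.events])  # ['kick', 'snare', 'kick']
print(rhythm.iois())                      # [0.5, 0.5]
```

Sorting on every append keeps the sketch simple. A real implementation would more likely insert in place or validate ordering on construction.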

Fig. 1: Core class hierarchy.

Note

The values of RhythmEvents are implemented specifically in the derived classes without overwriting the value property of a RhythmEvent, which can therefore still be used for other purposes, such as string labels.

The first specialisation is pitch, represented as a (fractional) MIDI pitch with values between 0 and 127, where the value 60 is defined as Middle C (C4).

This yields the NoteEvent class, which is derived from RhythmEvent; the corresponding sequence class is called NoteTrack.
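The following sketch shows how a NoteEvent might add pitch as a separate attribute, leaving the inherited `value` free for a label. It is a minimal illustration assuming Python dataclasses. The `pitch` field name, the range check and the NoteTrack methods `pitches()` and `intervals()` are hypothetical and not taken from MeloSpyLib.

```python
from dataclasses import dataclass, field
from typing import Any, List


@dataclass
class RhythmEvent:
    onset: float      # seconds
    duration: float   # seconds
    value: Any        # free payload, e.g. a string label


@dataclass
class NoteEvent(RhythmEvent):
    # Pitch is an extra attribute; the inherited `value` is not overwritten
    # and stays available for other purposes.
    pitch: float  # fractional MIDI pitch, 60.0 = Middle C (C4)

    def __post_init__(self) -> None:
        # Enforce the 0..127 MIDI range mentioned in the text.
        if not 0.0 <= self.pitch <= 127.0:
            raise ValueError(f"MIDI pitch out of range: {self.pitch}")


@dataclass
class NoteTrack:
    """A sequence of NoteEvents."""
    notes: List[NoteEvent] = field(default_factory=list)

    def pitches(self) -> List[float]:
        return [n.pitch for n in self.notes]

    def intervals(self) -> List[float]:
        """Pitch differences between consecutive notes, in semitones."""
        p = self.pitches()
        return [b - a for a, b in zip(p, p[1:])]


track = NoteTrack([
    NoteEvent(onset=0.0, duration=0.4, value="phrase 1", pitch=60.0),
    NoteEvent(onset=0.5, duration=0.4, value="phrase 1", pitch=63.0),
    NoteEvent(onset=1.0, duration=0.9, value="phrase 1", pitch=67.0),
])
print(track.intervals())  # [3.0, 4.0]
```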

Pitch, F0 and Tone Systems

Representing pitch as a fractional MIDI number essentially means representing tones by their fundamental frequency F0 (or rather its psychophysical equivalent). This approach is rather simple but powerful enough to represent all conceivable tone events (or note events, as they are erroneously called here). A more sophisticated approach might interpret MIDI pitch values as integer indices into a (configurable) tone system, which could be the usual Western 12-tone equal temperament or any other system, e.g., just intonation, Pythagorean tuning, or the tone system underlying an Indian raga. This view might be adopted in a future version of MeloSpyLib, but for the time being, and for the purposes of the Jazzomat Project, the current representation is fully sufficient.
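To make the F0 view of fractional MIDI pitch concrete, here is a small sketch using the standard 12-tone equal-temperament mapping f = 440 · 2^((p - 69)/12). It assumes a concert-pitch reference of A4 = 440 Hz, which the text does not specify. The function names are hypothetical and not part of MeloSpyLib.

```python
import math

# Assumed reference: A4 = MIDI 69 = 440 Hz in 12-tone equal temperament.
# The text only fixes MIDI 60 as Middle C; the 440 Hz tuning is an assumption.
A4_MIDI = 69
A4_HZ = 440.0


def midi_to_f0(pitch: float, ref_hz: float = A4_HZ) -> float:
    """Fundamental frequency in Hz of a (possibly fractional) MIDI pitch."""
    return ref_hz * 2.0 ** ((pitch - A4_MIDI) / 12.0)


def f0_to_midi(f0: float, ref_hz: float = A4_HZ) -> float:
    """Fractional MIDI pitch of a fundamental frequency in Hz."""
    if f0 <= 0.0:
        raise ValueError("f0 must be positive")
    return A4_MIDI + 12.0 * math.log2(f0 / ref_hz)


def cents_off_semitone(pitch: float) -> float:
    """Deviation from the nearest integer MIDI pitch, in cents (100 cents per semitone)."""
    return (pitch - round(pitch)) * 100.0


print(round(midi_to_f0(60.0), 2))   # 261.63 (Middle C)
print(f0_to_midi(440.0))            # 69.0
print(cents_off_semitone(60.25))    # 25.0
```

Because any positive frequency maps to some real-valued pitch, this representation can hold microtonal intonation, such as a slightly flat blue note, without a separate tone-system object.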

Because of the fundamental importance of metrically bound music, which comprises most of jazz, popular, classical, and folk music, the next specialisation is MetricalEvent. It has a MetricalPosition, which codes the event's metrical position (cf. Metrical System). A sequence of pure MetricalEvents is called a MeterGrid.
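A minimal sketch of a MetricalEvent and a MeterGrid, assuming Python dataclasses. The actual coding of metrical positions is described in the Metrical System section; the (bar, beat, tatum) fields used here and the `make_beat_grid()` helper are hypothetical placeholders chosen only for illustration.

```python
from dataclasses import dataclass, field
from typing import Any, List


@dataclass(frozen=True, order=True)
class MetricalPosition:
    # Hypothetical coding for illustration only; the actual scheme is
    # described in the Metrical System section.
    bar: int    # bar number, counted from 1
    beat: int   # beat within the bar, counted from 1
    tatum: int  # subdivision of the beat, counted from 1


@dataclass
class RhythmEvent:
    onset: float      # seconds
    duration: float   # seconds
    value: Any


@dataclass
class MetricalEvent(RhythmEvent):
    position: MetricalPosition


@dataclass
class MeterGrid:
    """A sequence of pure MetricalEvents."""
    events: List[MetricalEvent] = field(default_factory=list)


def make_beat_grid(bars: int, beats_per_bar: int, tempo_bpm: float) -> MeterGrid:
    """Hypothetical helper: one MetricalEvent per beat at a constant tempo."""
    beat_dur = 60.0 / tempo_bpm  # seconds per beat
    grid = MeterGrid()
    for i in range(bars * beats_per_bar):
        bar, beat = divmod(i, beats_per_bar)
        grid.events.append(MetricalEvent(
            onset=i * beat_dur,
            duration=beat_dur,
            value=None,
            position=MetricalPosition(bar=bar + 1, beat=beat + 1, tatum=1),
        ))
    return grid


grid = make_beat_grid(bars=2, beats_per_bar=4, tempo_bpm=120.0)
print(grid.events[5].onset)     # 2.5
print(grid.events[5].position)  # MetricalPosition(bar=2, beat=2, tatum=1)
```

Making the position frozen and ordered lets positions be compared lexicographically (bar first, then beat, then tatum), which is convenient for sorting or aligning events to the grid.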

Finally, the class MetricalNoteEvent, which derives from both NoteEvent and MetricalEvent, provides the fundamental objects of Melody objects, the central class of MeloSpyLib.
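In Python, this double derivation can be sketched with multiple inheritance. The sketch below is a minimal illustration under assumptions: only the class names come from the text, while the dataclass fields, the hypothetical (bar, beat, tatum) position and the `notes_in_bar()` method are invented for the example. Because NoteEvent and MetricalEvent share the single base RhythmEvent, each MetricalNoteEvent carries exactly one onset, duration and value, plus a pitch and a position.

```python
from dataclasses import dataclass, field
from typing import Any, List, NamedTuple


class MetricalPosition(NamedTuple):
    # Hypothetical (bar, beat, tatum) coding, for illustration only.
    bar: int
    beat: int
    tatum: int


@dataclass
class RhythmEvent:
    onset: float
    duration: float
    value: Any


@dataclass
class NoteEvent(RhythmEvent):
    pitch: float  # fractional MIDI pitch


@dataclass
class MetricalEvent(RhythmEvent):
    position: MetricalPosition


@dataclass
class MetricalNoteEvent(NoteEvent, MetricalEvent):
    """Combines pitch (from NoteEvent) with a metrical position (from MetricalEvent)."""


@dataclass
class Melody:
    """A sequence of MetricalNoteEvents."""
    notes: List[MetricalNoteEvent] = field(default_factory=list)

    def notes_in_bar(self, bar: int) -> List[MetricalNoteEvent]:
        return [n for n in self.notes if n.position.bar == bar]


melody = Melody([
    MetricalNoteEvent(onset=0.0, duration=0.3, value=None, pitch=62.0,
                      position=MetricalPosition(1, 1, 1)),
    MetricalNoteEvent(onset=0.5, duration=0.3, value=None, pitch=65.0,
                      position=MetricalPosition(1, 2, 1)),
    MetricalNoteEvent(onset=2.0, duration=0.8, value=None, pitch=69.0,
                      position=MetricalPosition(2, 1, 1)),
])
print([n.pitch for n in melody.notes_in_bar(1)])  # [62.0, 65.0]
```

Keyword arguments are used in the constructor calls because the field order produced by dataclass multiple inheritance is easy to get wrong.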

At the next level, specific metadata and annotations are assigned to a melody, which yields a Song object. Further classes can be derived from the Song class for special needs. The current implementation includes a Solo class for the purposes of the Jazzomat Project, which comprises a comprehensive set of metadata as well as several SectionsList members that encode the phrase, chord, form, and chorus structure of a solo (cf. Annotations).
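A rough sketch of how a Solo might attach annotation layers to its melody, assuming that each section is given by start and end note indices. Only Song, Solo and SectionsList are named in the text; the Section class, all field names and the `split_by()` method are hypothetical, and the melody is simplified to a plain list of MIDI pitches to keep the example short.

```python
from dataclasses import dataclass, field
from typing import Any, Dict, List


@dataclass
class Section:
    # Hypothetical: a section spans the note indices start..end (inclusive).
    start: int
    end: int
    value: Any  # e.g. phrase ID, chord symbol, form label, chorus number


@dataclass
class SectionsList:
    kind: str  # e.g. "phrase", "chord", "form" or "chorus"
    sections: List[Section] = field(default_factory=list)


@dataclass
class Song:
    # Simplification: the melody is just a list of MIDI pitches here.
    melody: List[float]
    metadata: Dict[str, Any] = field(default_factory=dict)


@dataclass
class Solo(Song):
    annotations: Dict[str, SectionsList] = field(default_factory=dict)

    def split_by(self, kind: str) -> List[List[float]]:
        """Cut the melody into the sections of one annotation layer."""
        layer = self.annotations[kind]
        return [self.melody[s.start:s.end + 1] for s in layer.sections]


solo = Solo(melody=[62.0, 65.0, 69.0, 67.0, 65.0, 64.0],
            metadata={"title": "example"})
solo.annotations["phrase"] = SectionsList(
    "phrase", [Section(0, 2, 1), Section(3, 5, 2)])
print(solo.split_by("phrase"))  # [[62.0, 65.0, 69.0], [67.0, 65.0, 64.0]]
```

Keeping each annotation layer as a separate list of index ranges lets phrases, chords, form parts and choruses overlap freely without changing the underlying melody.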

Fig. 2: Core event hierarchy.

Next part: Transformations.

References

- Pfleiderer2010

Pfleiderer, M. & Frieler, K. (2010). The Jazzomat project. Issues and methods for the automatic analysis of jazz improvisations. In: Bader, R., Neuhaus, C. & Morgenstern, U. (Eds.), Concepts, Experiments, and Fieldwork: Studies in Systematic Musicology and Ethnomusicology (pp. 279-295). Frankfurt/M., Bern: P. Lang.

- Frieler2009

Frieler, K. (2009). Mathematik und kognitive Melodieforschung. Hamburg: Verlag Dr. Kovac (Dissertation).